Can You Believe it?? Even More on Rankings

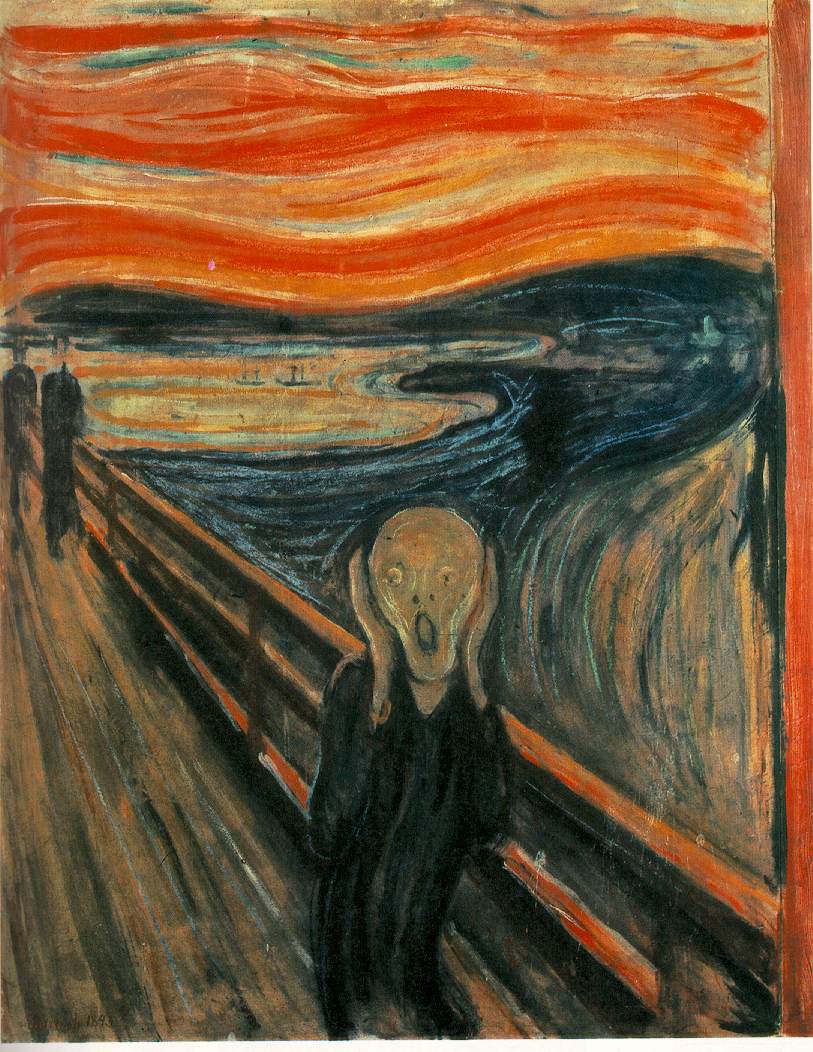

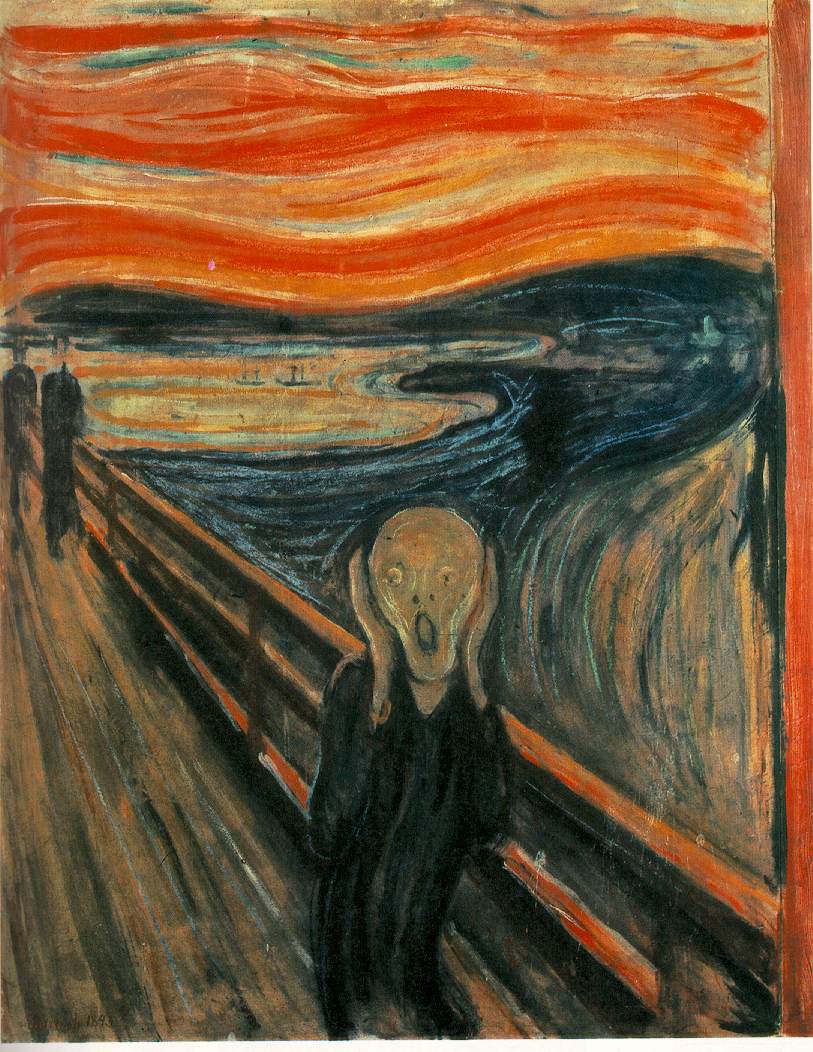

I have avoided much of the ranking controversy since law school deans and faculty started becoming dogs to USNWR’s tail. Sometimes they (rankings and deans) make me want to run screaming from the room, at least figuratively. We even had a guy on my faculty who would email all of us if we moved up or down a slot in whatever ranking he ran across while doing his early morning surfing. It was like getting tips from your bookie. Our tax department basks in being number two while I imagine our dean prepares for our drop since we will now be reporting all entering students instead of half of them. Generally, people find some reason to think the ranking that puts them highest is actually pretty good. So we hear about SSRN downloads from some, USNWR from others, and so on. The truth, as Nancy Rapoport reminds us, is that once you are outside the top 15 or so law schools, we are all pretty much the same. And we all want to be higher but are unwilling to do what it takes to actually be ranked higher. That would mean tougher tenure and especially post-tenure standards, reduced teaching for writers with the slack taken up by non-writers, and discontinuing some programs that exist because law professors are in a never ending process of log-rolling and out-whimping each other.

Part of the problem is deciding the relevant consumer of ranking information. For example, in the case of potential students, law school is a human capital investment. Some combination of bar passage rate and future income, discounted to present value and compared to tuition, would do the trick for many. Presumably that would capture at least some element of scholarship and teaching effectiveness as well. The model might be refined by accounting for the GPAs and LSATs of entering students in order to assess which school can do the most with the “least.” This may not be right for the public-service-oriented student, who calls for a ranking based on placement with public-service-oriented employers.
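For anyone who wants to see the student-as-investor arithmetic spelled out, here is a rough sketch and nothing more. Everything in it is an assumption for illustration: the function names, the 30-year horizon, the 5% discount rate, the decision to multiply bar passage rate by discounted income, and the two made-up schools. It is one possible way to combine the factors, not an actual ranking method.

```python
def present_value(annual_income, years, discount_rate):
    """Discount a level stream of annual income back to today's dollars."""
    return sum(annual_income / (1 + discount_rate) ** t
               for t in range(1, years + 1))


def student_value_score(bar_passage_rate, expected_income, total_tuition,
                        years=30, discount_rate=0.05):
    """Hypothetical score: expected discounted earnings per tuition dollar.

    Weighting income by bar passage rate is an assumption, not a standard.
    """
    expected_earnings = bar_passage_rate * present_value(
        expected_income, years, discount_rate)
    return expected_earnings / total_tuition


# Made-up schools and numbers, for illustration only.
schools = {
    "School A": student_value_score(0.90, 120_000, 150_000),
    "School B": student_value_score(0.75, 90_000, 60_000),
}

# Highest score first.
ranking = sorted(schools, key=schools.get, reverse=True)
print(ranking)
```

With these invented numbers the cheaper School B comes out ahead of School A despite its lower bar passage rate and income, which is the kind of result a tuition-sensitive student would care about.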

If the consumers are the public more generally (and why not, since they pay the salaries of most of us), the ranking would account for the “public goods” produced by attorneys and law professors. I have no idea how to get at the first factor, especially since students tend to arrive with public or personal interest goals already in place, but an indirect approach might be to assess schools by the number of graduates involved in some kind of disciplinary problem. Would it be fair to say that schools with relatively high graduate involvement in disciplinary problems are probably not emphasizing the importance of ethics and public service?

For these consumers -- the public -- scholarship only matters if it makes a difference. Counting downloads or number of articles for them is a little like the falling tree in the forest question. If you write it and no one cites it or relies on it, have you written anything at all? In this regard a ranking akin to John Doyle’s, but with the focus on law schools, not reviews, would seem to make sense. Perhaps this has been done. I think it may have and would look for it, but if I spend one more minute thinking and writing about rankings I sincerely hope my dean will fire me.

Finally (don't get your hopes up, this is less than a minute), why don’t we take the law and economics approach? Let’s just ask everyone we see: Please rank the top 50 law schools. They will, in a sense, reveal their preferences no matter how ill-informed. What else matters?

2 Comments:

“discontinuing some programs that exist because law professors are in a never ending process of log-rolling and out-whimping each other.”

I know this is your pet issue, but how does your suggestion raise rankings? It seems to me that glossy brochures about programs can increase spending per student, lead to increased fundraising, and increase reputation.

Even accepting your view that this is a waste of money and not good for students, I don't see that translating to higher rankings.

I think you make a great point about the writing though. The irony of taking steps to emphasize the writing is that it does indeed improve reputation, but does not improve teaching, which is the core thing students are looking for - assuming they are the consumer of rankings.

Yeah, I guess that is less than clear.

First let me clarify the pet issue statement. My pet issue is not the programs per se but the processes through which they are established, and then the unwillingness and inability of those with vested interests to examine them to see if they really are in the best interests of the schools.

On the matter of spending, I did not mean to stop spending on those programs and do nothing else with the money. My thought had two steps. First, I know of no rating system that examines schools to see how many special domestic or international programs they offer students. Second, if the money were taken from those programs and invested in increasing scholarship by faculty and offering scholarships to high LSAT/GPA students, rankings would go up.

I am just not convinced that glossy brochures about those sorts of programs increase the willingness of donors to write checks.
